What Just Happened

Anthropic quietly shipped one of the biggest pricing changes in AI this year. The 1 million token context window for Claude Opus 4.6 and Sonnet 4.6 is now generally available at standard pricing. No surcharge. No premium. No beta header required. A 900,000 token request now costs exactly the same per token as a 9,000 token one. And NVIDIA GTC, the biggest AI hardware event of the year, kicks off Monday with Jensen Huang's keynote. Two stories today. Both have serious implications for where AI is heading next.

Anthropic’s Announcement Via Their Website

ARTIFICIAL INTELLIGENCE

🌎 Anthropic Just Dropped the Surcharge on Its Most Powerful Feature

Until today, using Claude at its full 1 million token context came at a steep premium. Anthropic applied a 2x multiplier to input tokens and a 1.5x multiplier to output tokens for any request exceeding 200,000 tokens. That premium is now gone entirely.

Opus 4.6 still costs $5 per million input tokens and $25 per million output tokens. Sonnet 4.6 costs $3 per million input tokens and $15 per million output tokens. But whether your prompt is 9,000 tokens or 900,000 tokens, the price per token is exactly the same. No multiplier. No penalty. No extra configuration needed.
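
To make the before-and-after concrete, here is a minimal sketch of the arithmetic using the prices quoted above. The model keys, the function name `request_cost`, and the example token counts are illustrative labels, not API identifiers. The sketch also assumes the old multipliers applied to the whole request once the input passed 200,000 tokens.

```python
# Prices in USD per million tokens, taken from the figures quoted above.
# The dictionary keys are illustrative labels, not official API model IDs.
PRICES = {
    "opus-4.6": {"input": 5.00, "output": 25.00},
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
}

# Old long-context rules as described above (assumed to apply to the
# whole request once the input exceeded the threshold).
LONG_CONTEXT_THRESHOLD = 200_000
OLD_INPUT_MULTIPLIER = 2.0
OLD_OUTPUT_MULTIPLIER = 1.5


def request_cost(model, input_tokens, output_tokens, legacy_surcharge=False):
    """Return the estimated cost of one request in USD."""
    price = PRICES[model]
    in_mult = out_mult = 1.0
    if legacy_surcharge and input_tokens > LONG_CONTEXT_THRESHOLD:
        in_mult, out_mult = OLD_INPUT_MULTIPLIER, OLD_OUTPUT_MULTIPLIER
    input_cost = input_tokens / 1_000_000 * price["input"] * in_mult
    output_cost = output_tokens / 1_000_000 * price["output"] * out_mult
    return input_cost + output_cost


if __name__ == "__main__":
    new = request_cost("opus-4.6", 900_000, 4_000)
    old = request_cost("opus-4.6", 900_000, 4_000, legacy_surcharge=True)
    print(f"flat pricing: ${new:.2f}")   # flat pricing: $4.60
    print(f"old surcharge: ${old:.2f}")  # old surcharge: $9.15
```

Under these assumptions, a 900,000 token Opus 4.6 prompt with a 4,000 token reply works out to about $4.60 now, versus about $9.15 under the old multipliers.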

Here is what 1 million tokens actually means in practice. It is roughly 750,000 words. That is several full-length books. Months of meeting transcripts. A full codebase. Years of legal documents. All of it fed into Claude in a single request without losing coherence, without chunking, without retrieval-augmented generation workarounds.
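
For a rough sense of scale, the conversion can be sketched in a few lines. The 0.75 words-per-token ratio is the one implied by the 750,000 word figure above. The 90,000 words-per-book figure is an assumption for illustration, and real ratios vary with language and content.

```python
TOKENS = 1_000_000
WORDS_PER_TOKEN = 0.75   # implied by the 750,000 word figure above
WORDS_PER_BOOK = 90_000  # assumption: a typical full-length novel


def tokens_to_words(tokens, words_per_token=WORDS_PER_TOKEN):
    """Estimate how many English words fit in a given token budget."""
    return tokens * words_per_token


words = tokens_to_words(TOKENS)
books = words / WORDS_PER_BOOK
print(f"{words:,.0f} words, about {books:.1f} books")
# 750,000 words, about 8.3 books
```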

While every other model falls off a cliff at scale, Opus 4.6 holds at 78.3% recall at 1M tokens. GPT-5.4 drops to 36.6%. Gemini 3.1 Pro collapses to 25.9%. The gap is not close.

Those numbers tell the whole story. Every competitor degrades badly as context scales up. GPT-5.4 starts at 79.3% at 256K and crashes to 36.6% at 1M. Gemini 3.1 Pro starts at 71.9% at 128K and falls to 25.9% at 1M. Opus 4.6 starts at 91.9% and holds at 78.3% all the way to the top. Sonnet 4.6 follows the same pattern, dropping only to 65.1% at full context while every competitor is in freefall.
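
As a quick check on those figures, this small sketch computes how many percentage points each model loses and what share of its starting recall it keeps at 1M tokens. The numbers are the ones quoted above. The helper name `degradation` is made up for this example, Sonnet 4.6 is left out because no starting figure is given, and the starting points sit at different context lengths, so the comparison is only approximate.

```python
# (starting recall %, recall % at 1M tokens), as quoted above.
RECALL = {
    "Opus 4.6": (91.9, 78.3),
    "GPT-5.4": (79.3, 36.6),
    "Gemini 3.1 Pro": (71.9, 25.9),
}


def degradation(start, at_1m):
    """Return (percentage points lost, fraction of starting recall retained)."""
    return start - at_1m, at_1m / start


for model, (start, at_1m) in RECALL.items():
    lost, retained = degradation(start, at_1m)
    print(f"{model}: -{lost:.1f} points, retains {retained:.0%} of starting recall")
# Opus 4.6: -13.6 points, retains 85% of starting recall
# GPT-5.4: -42.7 points, retains 46% of starting recall
# Gemini 3.1 Pro: -46.0 points, retains 36% of starting recall
```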

This is not just one of the biggest context windows. It is the most reliable one at scale. And as of today, each token costs the same whether you use all of it or 1% of it.

The media limit also jumped from 100 to 600 images or PDF pages per request. The 1M window is available on Claude Platform, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. Claude Code users on Max, Team, and Enterprise get it by default.

Why This Is A Bigger Deal Than A Pricing Update

Google's Gemini still charges a premium above 200,000 tokens. OpenAI's GPT-5.4 matches the 1 million token window but does not beat Claude on recall accuracy at full context length. Anthropic just made the most capable long context model in the industry also the most affordable one.

This is not a feature update. This is a competitive move. Anthropic is telling every enterprise team, every developer, every researcher who was doing mental math on long context costs to stop doing that math. The barrier is gone.

⚡ The Vibe Check: The teams that were building RAG pipelines as a workaround for context limits are going to have a very interesting weekend. A lot of architectures just got simpler overnight.

AI Events

Also Today: NVIDIA GTC Starts Monday 👀

The biggest AI hardware event of the year kicks off Monday and the entire industry is watching.

NVIDIA's GTC 2026 conference starts Monday with Jensen Huang's keynote and the expectations going in are unusually high. The question everyone is asking is not whether NVIDIA will show impressive things — it is whether Jensen can convince the market that NVIDIA's lead holds as AI shifts from massive model training toward inference, orchestration, and agent-heavy workloads.

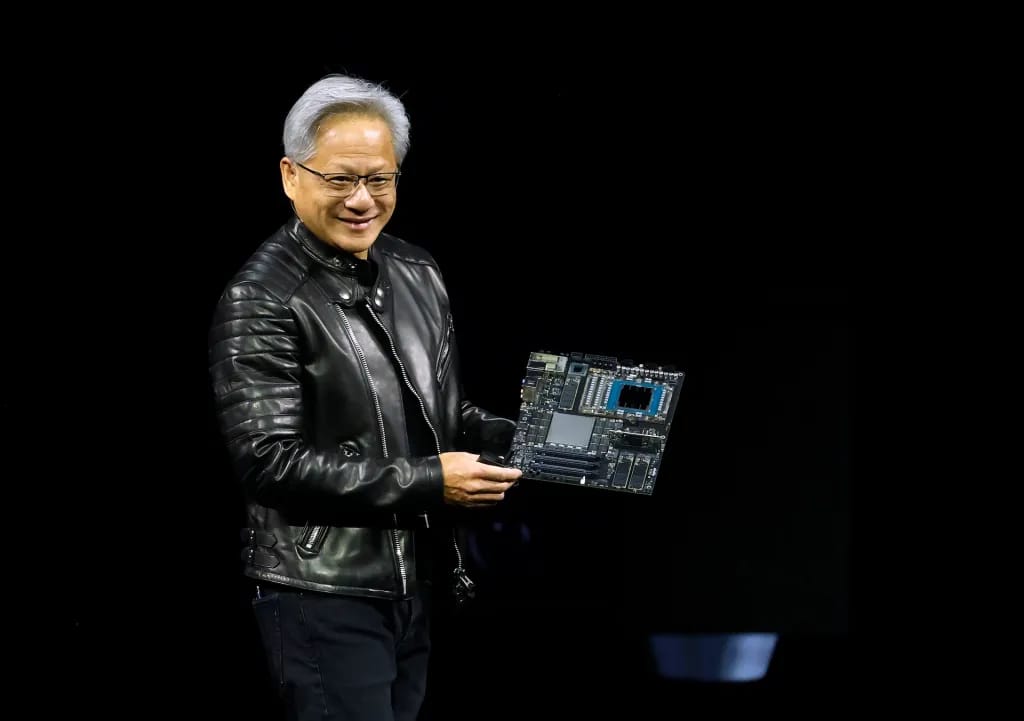

NVIDIA CEO - Jensen Huang

That shift matters because training a giant model is largely a one-time compute event. Running fleets of AI agents across enterprise workflows is a continuous compute event. The economics are different. The hardware requirements are different. And the companies best positioned to win that next layer are not necessarily the same ones that won the training layer.

Investors will be watching for signals on the Vera Rubin AI data center platform, demand beyond the hyperscalers, and whether NVIDIA is positioning itself as a chip company or a full AI ecosystem company. The Nemotron Super model drop this week was not a coincidence. NVIDIA showed up to GTC week already having released a frontier open source model that beats closed competitors on agentic benchmarks. That is a company that knows exactly what story it wants to tell.

GTC runs all week. Jensen's keynote is Monday. If history is any guide it will be worth watching.

Meme Of The Day 🤣

128k context and you think you're smart. 256k and you're feeling yourself. 512k and you're dangerous. 1M context for free and you genuinely start questioning if you even need a team anymore. Anthropic really said here's a million tokens, no extra charge, go crazy. And we will.

What's The Recap?

Anthropic removed the last financial barrier to using Claude at full scale and NVIDIA is about to take the stage at the biggest AI hardware event of the year. The 1M context pricing change is the kind of update that sounds technical until you realize it just made an entirely new category of applications economically viable overnight. Legal teams processing full case histories. Researchers ingesting entire literature bodies. Engineers loading complete codebases. All of them just got a price cut they were not expecting on a Friday. Go check your Claude pricing before the weekend. And tune in Monday for Jensen.

Stay building. 🤖