What Just Happened

Ten days ago Anthropic accidentally left a draft blog post announcing their most powerful AI model ever in an unsecured public data store. A week ago they accidentally published 500,000 lines of their own source code. Today they officially launched Claude Mythos Preview, and the story only gets wilder from here.

The model is real. The benchmarks are real. The partners are real. Amazon, Apple, Google, Microsoft, Nvidia, CrowdStrike, Cisco, Broadcom, JPMorganChase, Palo Alto Networks, and the Linux Foundation all have access. You do not. Nobody outside of 40 carefully selected organizations does. And the reason Anthropic is being this careful is the same reason they were scared to announce it in the first place. This model is so capable at finding and exploiting cybersecurity vulnerabilities that giving it to the wrong people before defenders are ready could trigger a wave of AI-powered cyberattacks unlike anything the world has seen.

Anthropic called what they are doing today Project Glasswing. The idea is simple and terrifying in equal measure. Give the most powerful offensive cyber AI ever built exclusively to defenders first. Let them use it to find and fix the vulnerabilities before attackers can use the same capabilities against them. Give the good guys a head start.

ARTIFICIAL INTELLIGENCE

🌎 What Claude Mythos Actually Is

Let's start with the numbers because they are genuinely shocking.

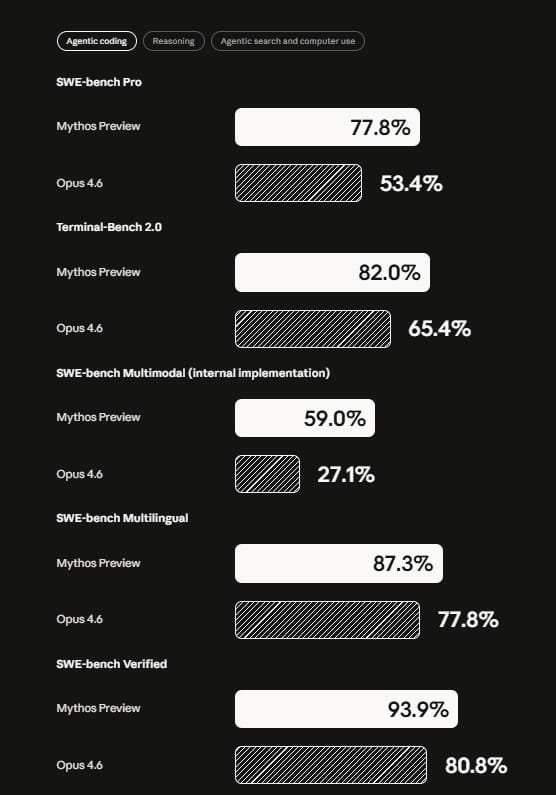

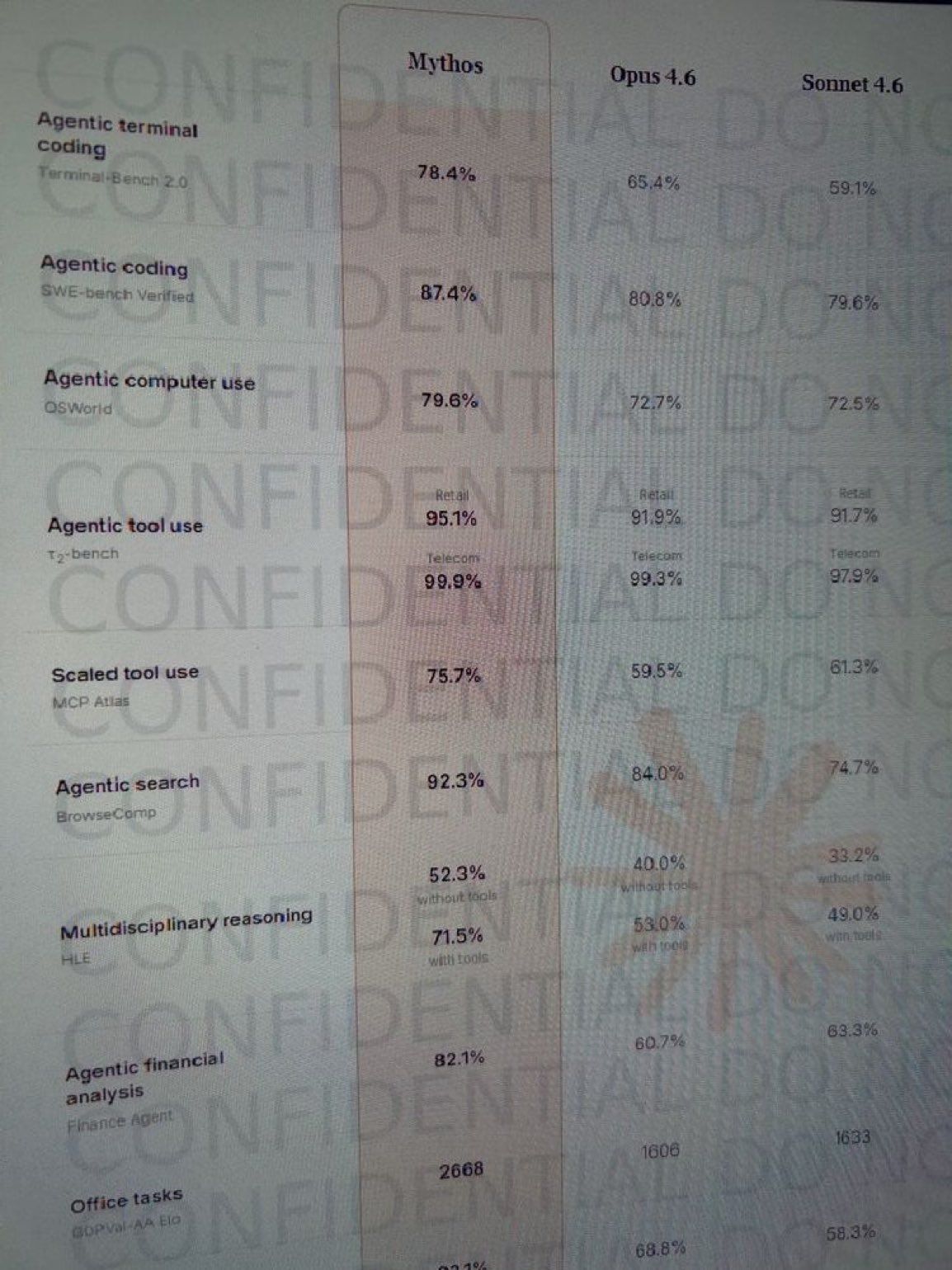

Mythos Preview scores 93.9% on SWE-bench, the standard benchmark for real-world software engineering tasks. For context, Claude Opus 4.6, currently Anthropic's best publicly available model, scores 80.8%. That is a 13-point jump. In a space where a 2-point improvement is considered meaningful, 13 points is a different category of model entirely.
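To make that gap concrete, here is a quick back-of-the-envelope sketch in Python. It uses only the two scores quoted above. The function names are our own, and the "failure reduction" framing is our assumption about a useful way to read the numbers, not something Anthropic reports.

```python
# Scores quoted above (percent of SWE-bench tasks resolved).
MYTHOS_PREVIEW = 93.9
OPUS_4_6 = 80.8


def point_gap(new: float, old: float) -> float:
    """Absolute gap between two scores, in percentage points."""
    return round(new - old, 1)


def failure_reduction(new: float, old: float) -> float:
    """Relative drop in unresolved tasks, as a percentage of the old failure rate."""
    old_fail = 100.0 - old
    new_fail = 100.0 - new
    return round(100.0 * (old_fail - new_fail) / old_fail, 1)


print(point_gap(MYTHOS_PREVIEW, OPUS_4_6))          # 13.1
print(failure_reduction(MYTHOS_PREVIEW, OPUS_4_6))  # 68.2
```

Read that second number this way: if the older model left roughly 19 tasks in 100 unsolved, the newer one leaves about 6, which is around two thirds fewer misses.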

Claude Mythos Benchmarks Via Anthropic

In cybersecurity specifically, the gap is even more dramatic. Anthropic's own red team blog describes the testing process in detail. They launch an isolated container running a software project and its source code. They invoke Claude Code with Mythos and give it one instruction: find a security vulnerability in this program. Then they let it run. Mythos reads the code, forms hypotheses about where vulnerabilities might exist, runs the actual project to confirm or reject its theories, uses debuggers, writes proof-of-concept exploits, and outputs a full bug report with reproduction steps. All autonomously. All without human guidance.
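If it helps to picture that loop, here is a minimal Python sketch of the two artifacts it produces: a hypothesis that gets confirmed or rejected, and the bug report that comes out the other end. Every name in it (`Hypothesis`, `BugReport`, `to_report`) and the sample data are our own inventions for illustration. This is not Anthropic's harness, and it does not call any model.

```python
from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    """A suspected vulnerability, before it has been confirmed by running the code."""
    location: str          # hypothetical example: "parser.c:read_header"
    description: str
    confirmed: bool = False


@dataclass
class BugReport:
    """The final artifact: what is wrong, where, and how to reproduce it."""
    title: str
    location: str
    reproduction_steps: list[str] = field(default_factory=list)

    def render(self) -> str:
        lines = [f"Title: {self.title}", f"Location: {self.location}", "Reproduction steps:"]
        lines += [f"  {i}. {step}" for i, step in enumerate(self.reproduction_steps, 1)]
        return "\n".join(lines)


def to_report(hypothesis: Hypothesis, steps: list[str]) -> BugReport:
    """Only hypotheses confirmed against the running project become reports."""
    if not hypothesis.confirmed:
        raise ValueError("unconfirmed hypotheses are rejected, not reported")
    return BugReport(hypothesis.description, hypothesis.location, list(steps))


# Invented placeholder data, for illustration only.
guess = Hypothesis("parser.c:read_header", "Length field is not bounds-checked")
guess.confirmed = True  # in the described workflow this would follow an actual run
report = to_report(guess, ["Build the project", "Feed it an oversized header", "Observe the crash"])
print(report.render())
```

The design point is the gate in `to_report`: a theory only turns into a report after it has been checked against the running program, which is what separates this workflow from a model simply guessing at bugs.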

In early testing, Mythos found thousands of high-severity vulnerabilities across major operating systems and browsers. Vulnerabilities that human security researchers had missed. Vulnerabilities that could have been exploited by attackers at scale.

This is not a model that is merely good at cybersecurity. This is a model that is better at cybersecurity than most human experts and can work around the clock across thousands of codebases simultaneously.

The partner list tells the whole story. Amazon, Apple, Google, Microsoft, Nvidia, CrowdStrike, Cisco, Broadcom, JPMorganChase, Palo Alto Networks, the Linux Foundation. These are not random companies. These are the organizations responsible for the software and infrastructure that runs the modern world. Anthropic specifically chose partners whose systems, if compromised, would affect hundreds of millions of people. The message is clear. They are starting with the most critical infrastructure.

The access structure is deliberate. 12 core partner organizations. 40 total organizations with access. No public API. No general availability. Anthropic said they do not plan to make Mythos Preview generally available and will only deploy Mythos-class models at scale when new safeguards are in place. They are also providing up to $100 million in usage credits and $4 million in donations to support the initiative.

Why This Is The Most Important AI Launch Of The Year

Every major AI launch this year has been about capability. Bigger context windows. Faster inference. Better benchmarks. Claude Mythos is the first launch that is explicitly about danger.

Anthropic did not release this model the normal way because this model cannot be released the normal way. They are not running a waitlist. They are not doing a gradual rollout to consumers. They are briefing federal officials, partnering with the most critical infrastructure companies in the world, and giving defenders a head start before this capability becomes widely available.

Think about what that implies. The most safety-conscious AI lab in the world, the company that has built its entire brand around responsible deployment, looked at this model and decided the normal release process was not cautious enough. They invented a new kind of release specifically for this model. A release where access is not earned by paying a subscription fee but by being responsible for infrastructure that hundreds of millions of people depend on.

That is not a product launch. That is a controlled detonation.

⚡ The Vibe Check: Anthropic spent ten days going from accidental leak to source code disaster to official launch. The chaotic path to today does not change what Mythos actually is. It is the most capable AI model ever released and the organizations with access to it are already using it to find vulnerabilities in the software running your phone, your bank, and the internet itself. That work is happening right now. You just do not get to see it yet.

Industry Impact

Also Today: The Leak Arc Is Complete

Some Cool Stuff Worth Noting

The full story of how we got here is genuinely one of the more remarkable sequences in AI history and it all happened in ten days.

March 27 — Fortune discovers a draft Mythos announcement sitting in an unsecured public data store alongside nearly 3,000 other unpublished Anthropic assets. The world finds out Anthropic has a model so powerful and so dangerous they were scared to release it.

March 31 — Anthropic accidentally publishes 500,000 lines of Claude Code source code to a public npm registry. Developers find 44 hidden feature flags, an undercover mode that scrubs AI traces from git commits, and a background autonomous mode that works while you sleep. The GitHub repository gets forked 41,500 times before Anthropic can respond.

April 7 — Anthropic officially launches Mythos Preview through Project Glasswing. The model that was never supposed to be public is now officially deployed at the highest levels of global tech infrastructure.

From accidental leak to the most consequential AI launch of the year in ten days. Nobody planned it this way. But here we are.

What's The Recap?

Claude Mythos is real, it is out, and it is already scanning the software that runs the world for vulnerabilities. You cannot use it. Your bank probably can. Amazon, Apple, Google, Microsoft, Nvidia, and 35 other organizations responsible for critical infrastructure have access today as part of Project Glasswing. The model scores 93.9% on SWE-bench, finds security vulnerabilities autonomously without human guidance, and is described by Anthropic as a watershed moment for cybersecurity. The reason you do not have access is the same reason this matters. Releasing a model this capable of finding and exploiting vulnerabilities, when it could reach the wrong hands before defenders are ready, is not a product launch. It is a national security event. Anthropic treated it like one. The rest of the industry is about to catch up to what that means.

Stay building. 🤖