What Just Happened

Google had a big day. They dropped Gemini Embedding 2, their first fully multimodal embedding model that maps text, images, video, audio, and documents into a single unified space. And in the same breath, they announced a sweeping Gemini-powered overhaul of Google Workspace — Docs, Sheets, Slides, and Drive. Two launches. One clear message. Google is done playing catch-up.

ARTIFICIAL INTELLIGENCE

🌎 Gemini Embedding 2: One Model To Rule All Modalities

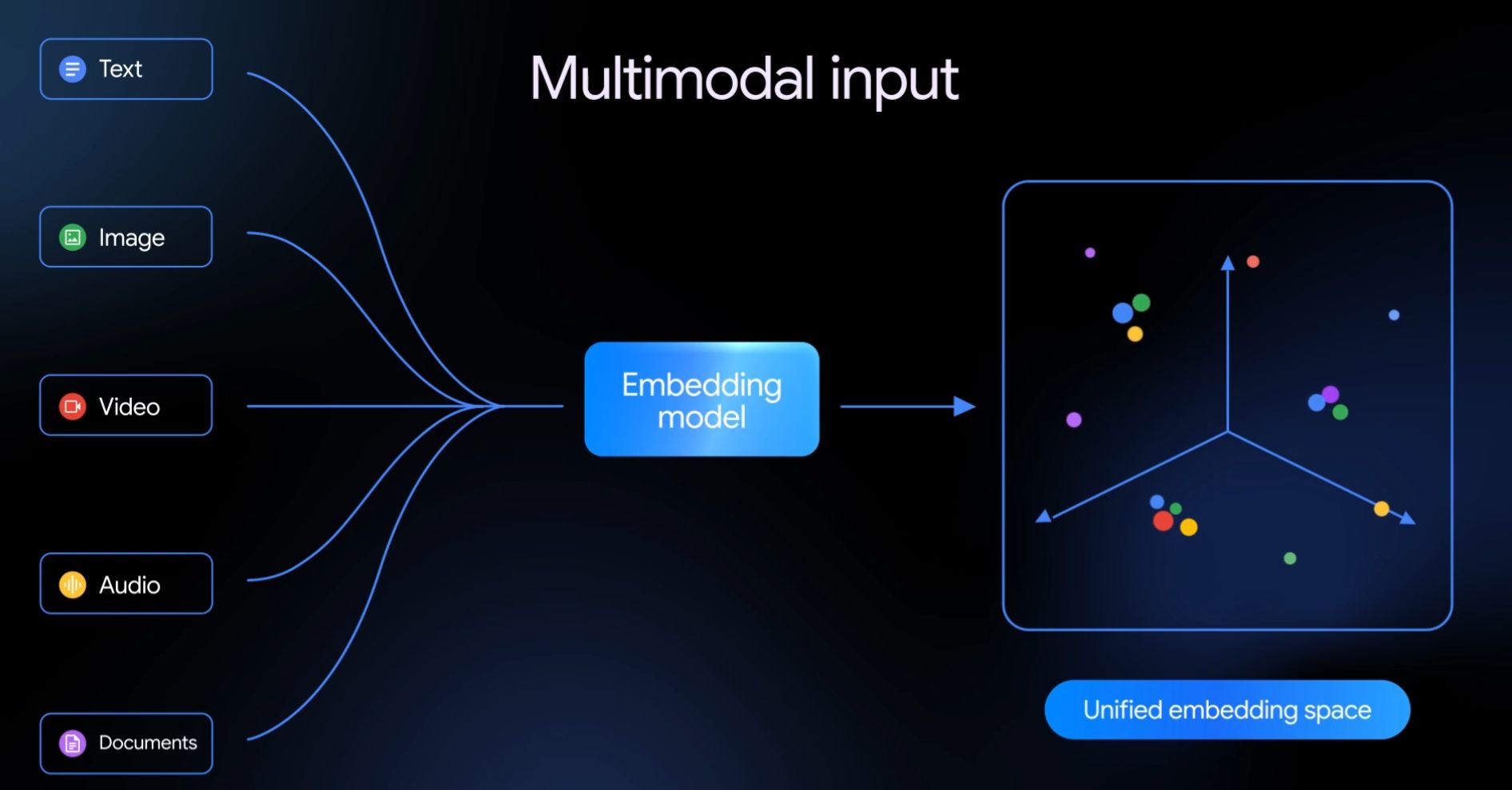

Here is what is actually new. Previous embedding models were either text-only or awkwardly stapled together across modalities. Gemini Embedding 2 treats everything (text, images, video, audio, and documents) as first-class citizens in a single shared embedding space. That is a fundamentally different architecture, and it shows in the numbers.

How Multimodal Input Works

Here is what the model supports out of the box:

Text: handles up to 8,192 input tokens, covering long documents and complex queries without chunking headaches.

Images: accepts up to 6 images per request in PNG and JPEG, letting you embed visual content directly alongside text.

Video: supports up to 120 seconds of MP4 or MOV input, opening up retrieval use cases that simply did not exist before in a single model.

Audio: the sleeper feature. The model ingests audio natively without requiring transcription first. That means tone, pacing, and acoustic context survive the embedding process. No other major model does this.

Documents: handles PDFs up to 6 pages directly, without preprocessing pipelines.
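If you want to catch oversized inputs before sending anything, here is a minimal pre-flight check built from the limits listed above. The `EmbedRequest` class and `check_limits` function are hypothetical names made up for this sketch, not part of any Google SDK, and the numbers are simply the ones quoted in this post.

```python
from dataclasses import dataclass

# Limits as quoted in this post; treat them as assumptions, not official docs.
LIMITS = {
    "text_tokens": 8192,
    "images": 6,
    "video_seconds": 120,
    "pdf_pages": 6,
}


@dataclass
class EmbedRequest:
    """Hypothetical description of one embedding request (illustration only)."""
    text_tokens: int = 0
    images: int = 0
    video_seconds: float = 0.0
    pdf_pages: int = 0


def check_limits(req: EmbedRequest) -> list[str]:
    """Return human-readable violations; an empty list means the request fits."""
    problems = []
    for field, limit in LIMITS.items():
        value = getattr(req, field)
        if value > limit:
            problems.append(f"{field}={value} exceeds limit {limit}")
    return problems


print(check_limits(EmbedRequest(text_tokens=9000, images=2)))
# prints ['text_tokens=9000 exceeds limit 8192']
```

Anything that fails the check would need to be chunked or trimmed on your side first.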

It also ships with Matryoshka Representation Learning, which lets you scale output dimensions down from 3,072 to 1,536 or 768 depending on how aggressively you want to manage storage costs.
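To make the storage trade-off concrete, here is a rough sketch of how Matryoshka-style embeddings are typically shortened on the client: keep the leading components, then rescale to unit length so cosine similarity still behaves. Whether Gemini Embedding 2 needs that rescaling step, or can return the smaller size directly, is an assumption here. The helper names and the float32 (4 bytes per value) storage figure are also illustrative.

```python
import math


def truncate_embedding(vec, dim):
    """Keep the first `dim` components and rescale the result to unit length."""
    if dim > len(vec):
        raise ValueError("target dim is larger than the embedding")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        return list(head)  # nothing to rescale
    return [x / norm for x in head]


def storage_bytes(num_vectors, dim, bytes_per_value=4):
    """Raw vector storage, assuming float32 values and no index overhead."""
    return num_vectors * dim * bytes_per_value


# Stand-in for a real 3,072-dimensional embedding.
full = [0.5] * 3072
small = truncate_embedding(full, 768)

for dim in (3072, 1536, 768):
    gigabytes = storage_bytes(1_000_000, dim) / 1e9
    print(f"{dim} dims -> {gigabytes:.1f} GB per million vectors")
```

Under those assumptions, a million vectors take roughly 12.3 GB at 3,072 dimensions, 6.1 GB at 1,536, and 3.1 GB at 768, which is the storage lever the smaller sizes give you.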

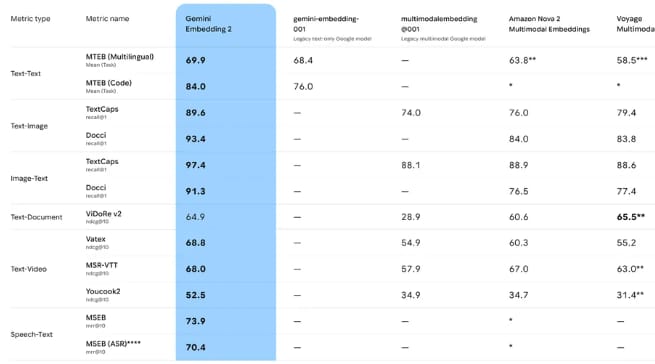

So? Why Do Benchmarks Even Matter?

This is not a case where Google is cherry-picking favorable comparisons. Gemini Embedding 2 leads across almost every category where competition exists, and runs completely uncontested in the categories where it does not.

On text tasks it scores 69.9 on MTEB Multilingual and 84.0 on MTEB Code. The previous Google text embedding model scored 76.0 on code. That is an 8-point jump over their own baseline.

On image retrieval it scores 89.6 and 93.4 on TextCaps and Docci respectively, beating Amazon Nova 2 and Voyage Multimodal 3.5 by double digits in several cases.

On video retrieval the Vatex score of 68.8 beats the next competitor by more than 13 points.

On speech tasks it scores 73.9 on MSEB. The column for every other competitor in that category is a dash. They do not support it.

Google DeepMind CEO - Demis Hassabis

⚡ The Vibe Check: The speech benchmark is the one to watch. Audio is the modality every enterprise is sitting on and nobody has properly solved for embeddings. Google just walked into that gap alone.

The Productivity Suite Changes

Also Today: Gemini Rewrites The Workspace Experience

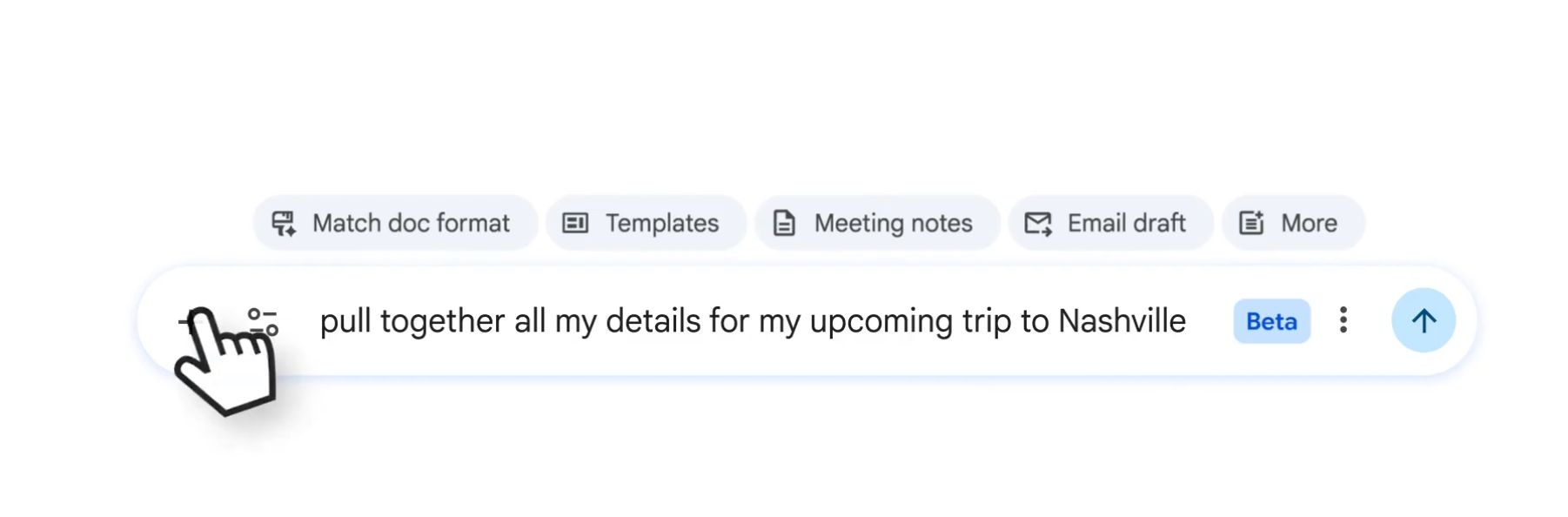

The second drop is the one that will affect more people tomorrow morning. Google is rolling out a Gemini-native overhaul of Docs, Sheets, Slides, and Drive — and it is not just a smarter sidebar.

Google Can Search Through All Your Drive

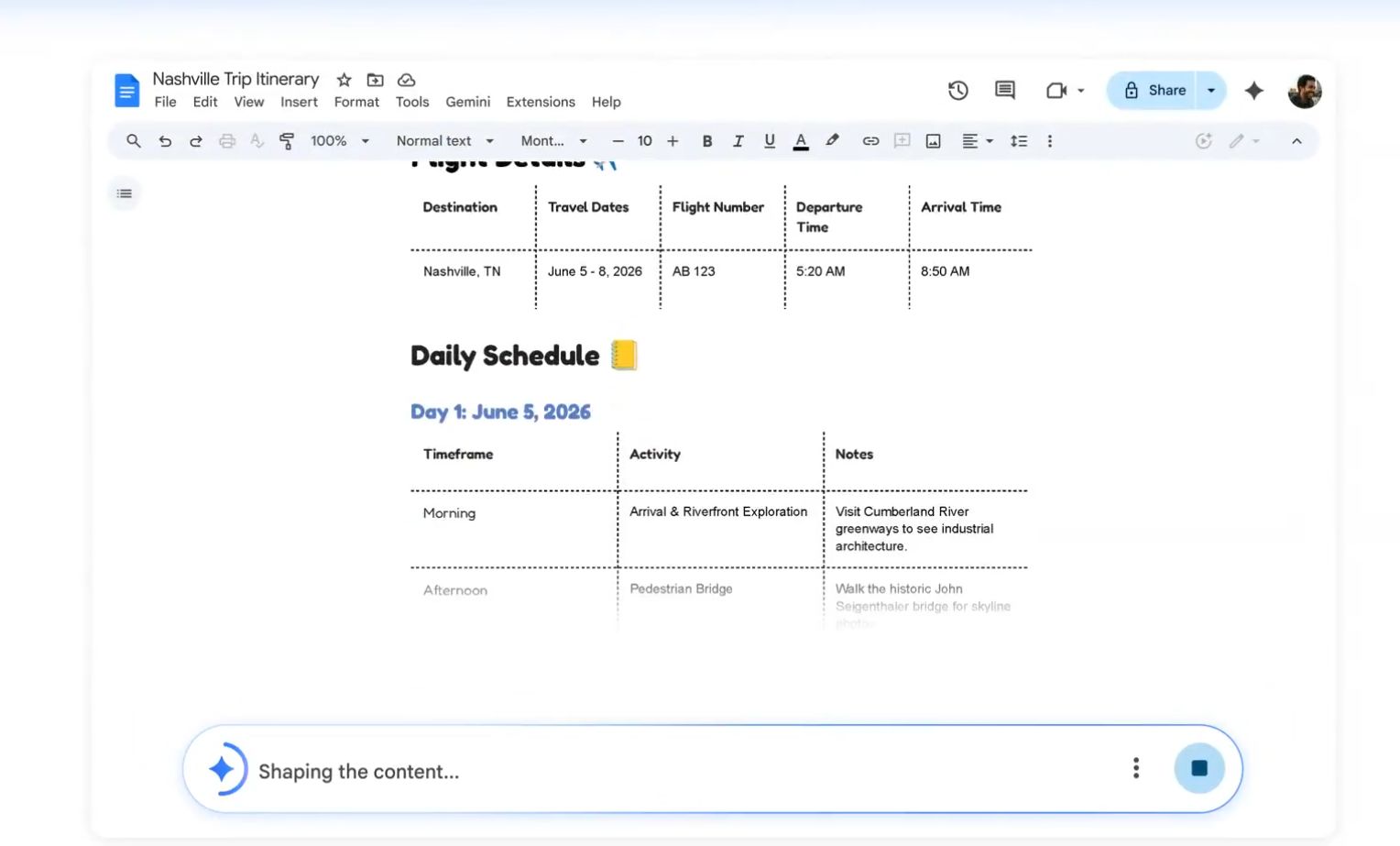

AI Overviews in Docs makes writing assistance context-aware. The model understands what your document is actually about and grounds its suggestions accordingly, rather than producing generic filler.

Talk Against Your Docs

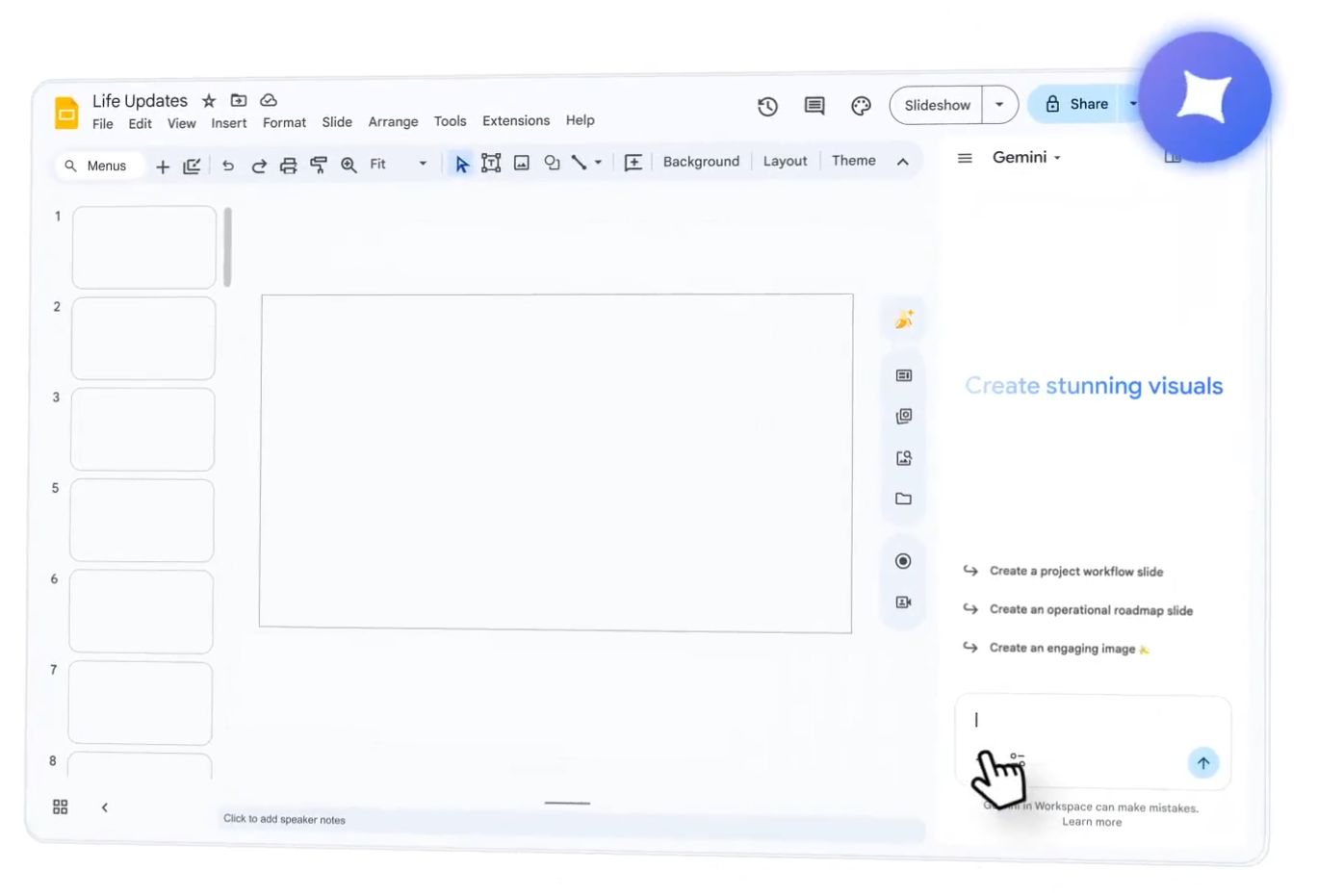

Fully editable AI-generated Slides means you can describe a presentation and get a complete deck back — not a template, not a suggestion, a real editable deck you can ship.

Create Slides With AI

New grounding sources let Docs anchor to your organization's actual content. This is the feature that cuts down on hallucination by tying answers to real sources, and that is what starts making AI genuinely useful inside a real company.

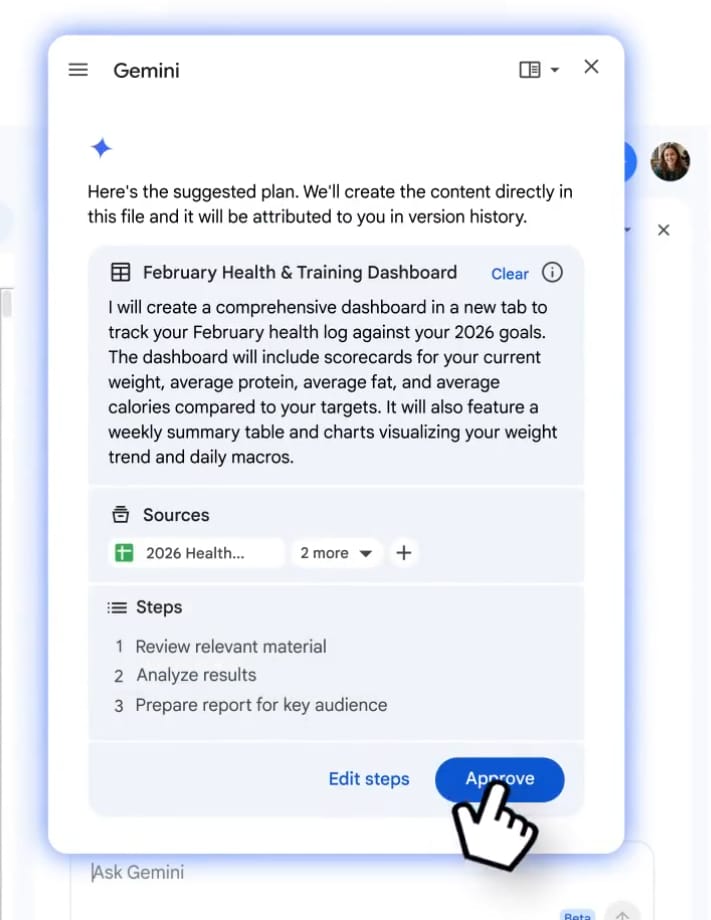

Talk Against Your Data With As Much Context As Needed

The rollout is live today for G1 Pro and Ultra users.

Why These Two Launches Are More Connected Than They Look

The timing is not a coincidence. Better embeddings are precisely what makes smarter grounding possible at scale. When Docs grounds a response in your company's content, it is using embeddings to find what is relevant. Gemini Embedding 2 makes that retrieval dramatically more accurate across every content type your organization actually uses — not just text documents, but slide decks, recorded meetings, PDFs, and more.
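For anyone who has not built retrieval before, the "use embeddings to find what is relevant" step usually boils down to ranking stored vectors by cosine similarity against the query vector. The sketch below shows that idea with tiny hand-made 3-dimensional vectors. The file names and numbers are invented for illustration, and nothing here is a claim about how Workspace actually implements grounding.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec, corpus, k=2):
    """Return the names of the k corpus items most similar to the query."""
    scored = [(cosine(query_vec, vec), name) for name, vec in corpus.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]


# Toy stand-ins for embeddings of different content types in one shared space.
corpus = {
    "q3_deck.pptx": [0.9, 0.1, 0.0],
    "standup_recording.mp4": [0.1, 0.9, 0.1],
    "contract.pdf": [0.0, 0.2, 0.9],
}
query = [0.8, 0.3, 0.0]

print(top_k(query, corpus))
# prints ['q3_deck.pptx', 'standup_recording.mp4']
```

The point of a single multimodal space is that the deck, the recording, and the PDF can all sit in the same index and be ranked by the same function.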

Google is building the infrastructure layer with Embedding 2 and surfacing the experience layer through Workspace. These are two parts of the same product strategy shipping on the same day.

It’s All Connected, or at least it sounds cooler put that way lol

What's The Recap?

Google launched the most capable multimodal embedding model on the market and rebuilt the AI experience inside the productivity suite a billion people use every day, all before lunch. The embedding model sets a new ceiling for what retrieval can look like across every modality. The Workspace update brings that capability to people who will never touch an API. If you are building anything that involves search, retrieval, or document understanding, Gemini Embedding 2 deserves a serious look. If you are a G1 Pro or Ultra user, open Docs today and see what changed. The pace of shipping in AI right now is genuinely hard to keep up with. The only thing that matters is whether you are using these tools or watching other people use them.

Google CEO - Sundar Pichai (Source: Dexerto)

Stay building. 🤖