What Just Happened

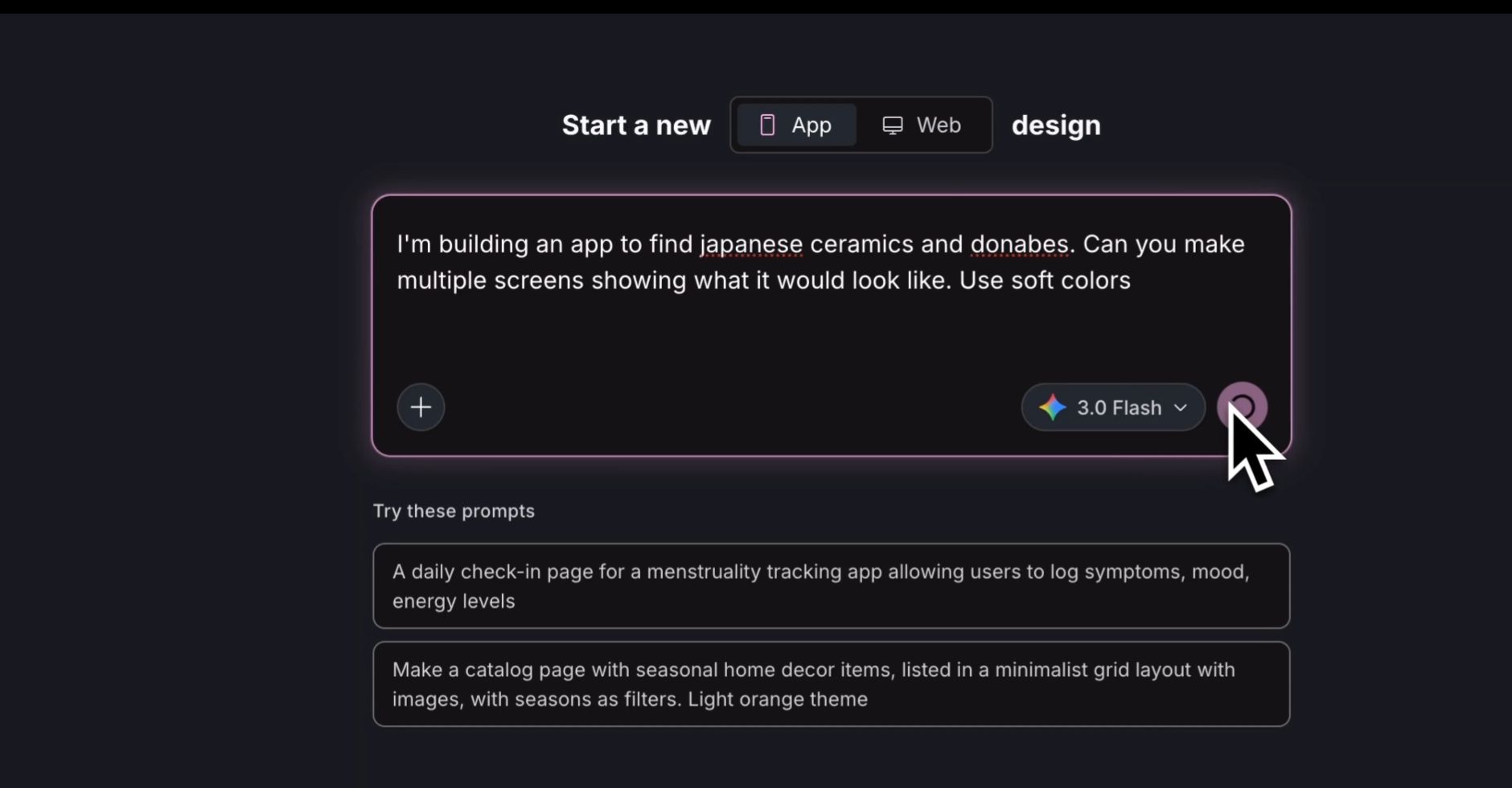

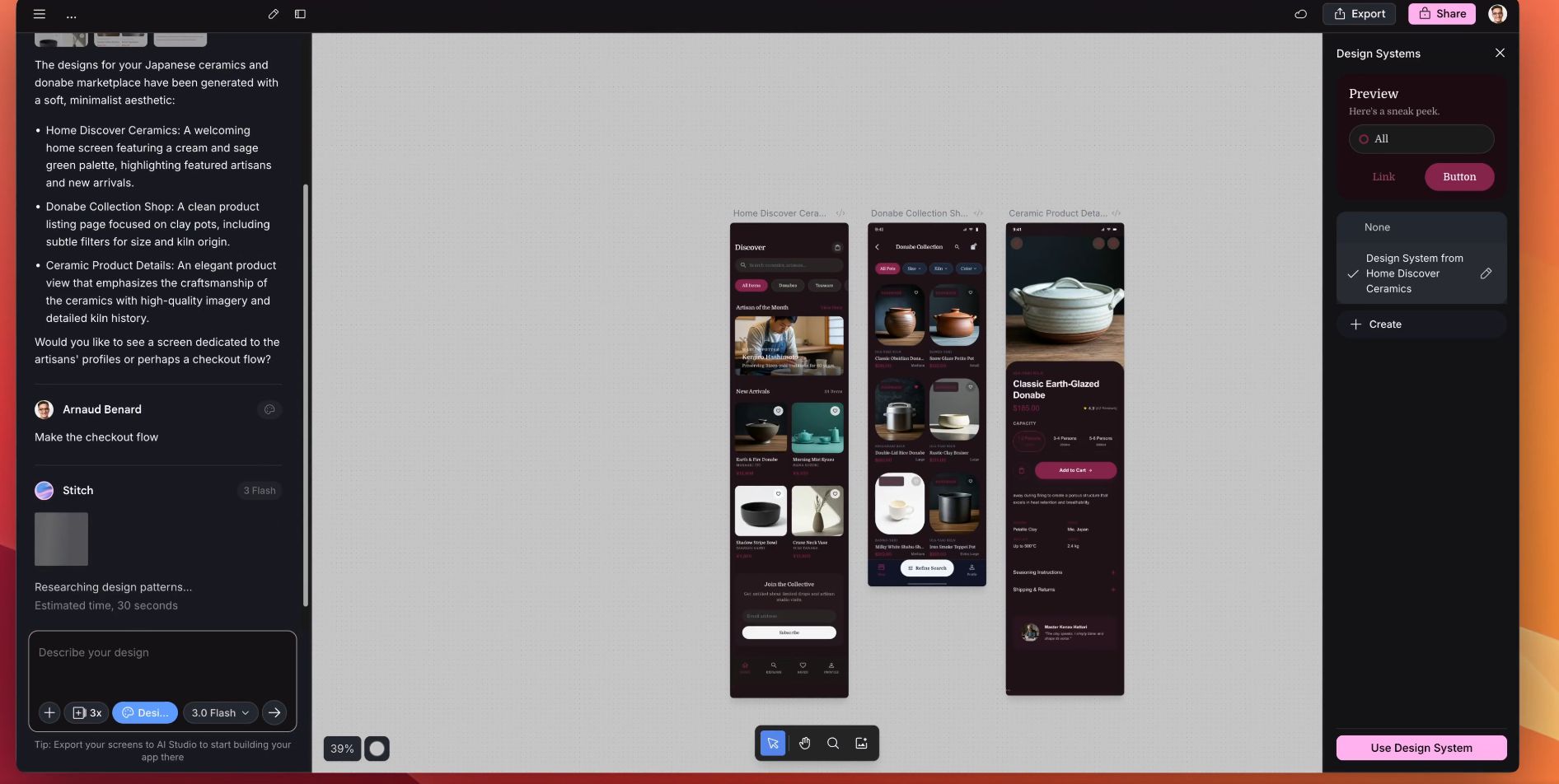

Google just completely rebuilt Stitch. What started as a simple AI mockup tool is now a full AI-native design platform with voice controls, an infinite canvas, and a built-in agent that designs alongside you.

Google Stitch Announced Today Via X

ARTIFICIAL INTELLIGENCE

Google Just Killed the Design Process

Google Labs dropped a massive update to Stitch. This isn't a small feature bump. They rebuilt the entire thing from the ground up.

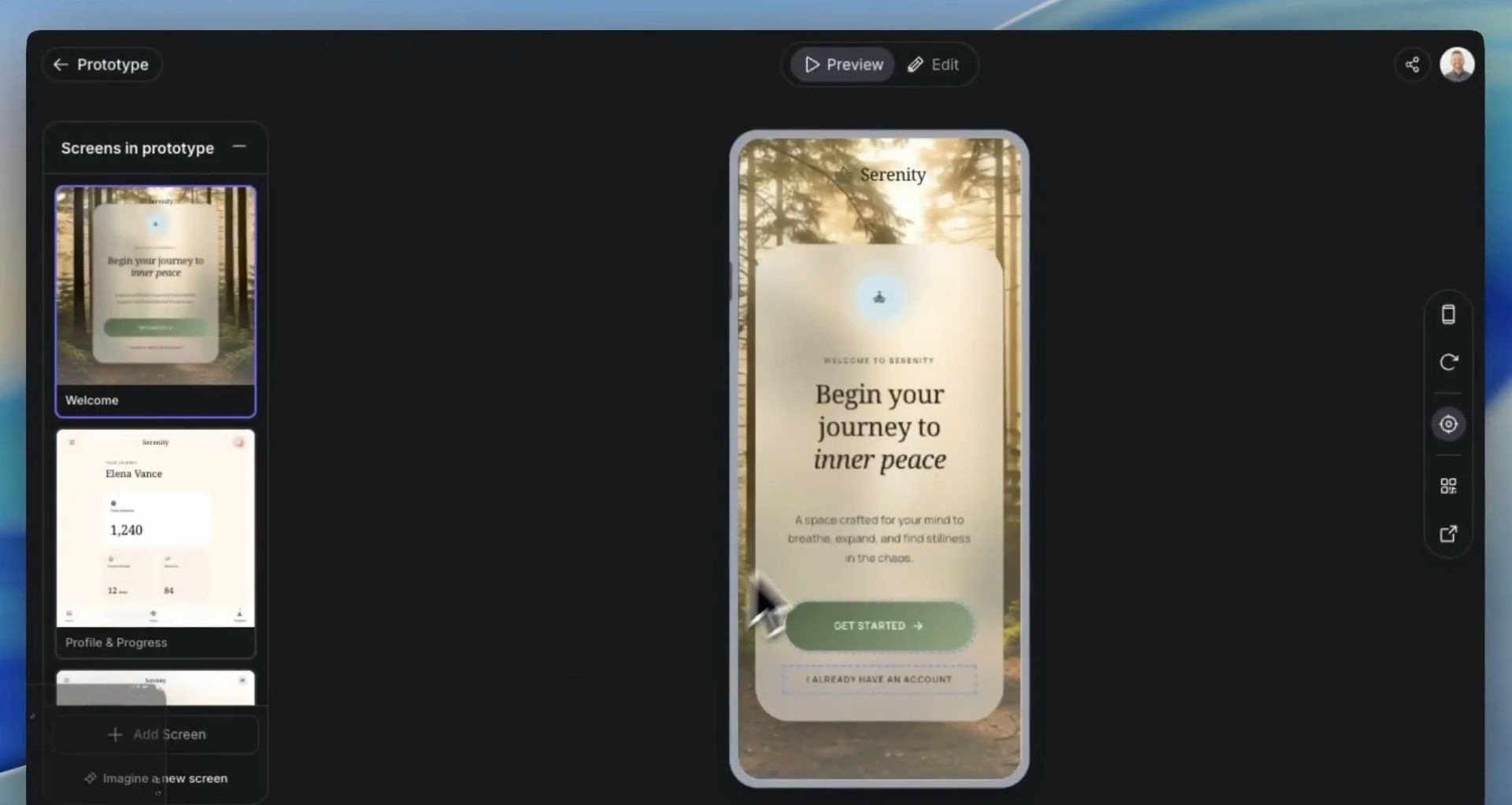

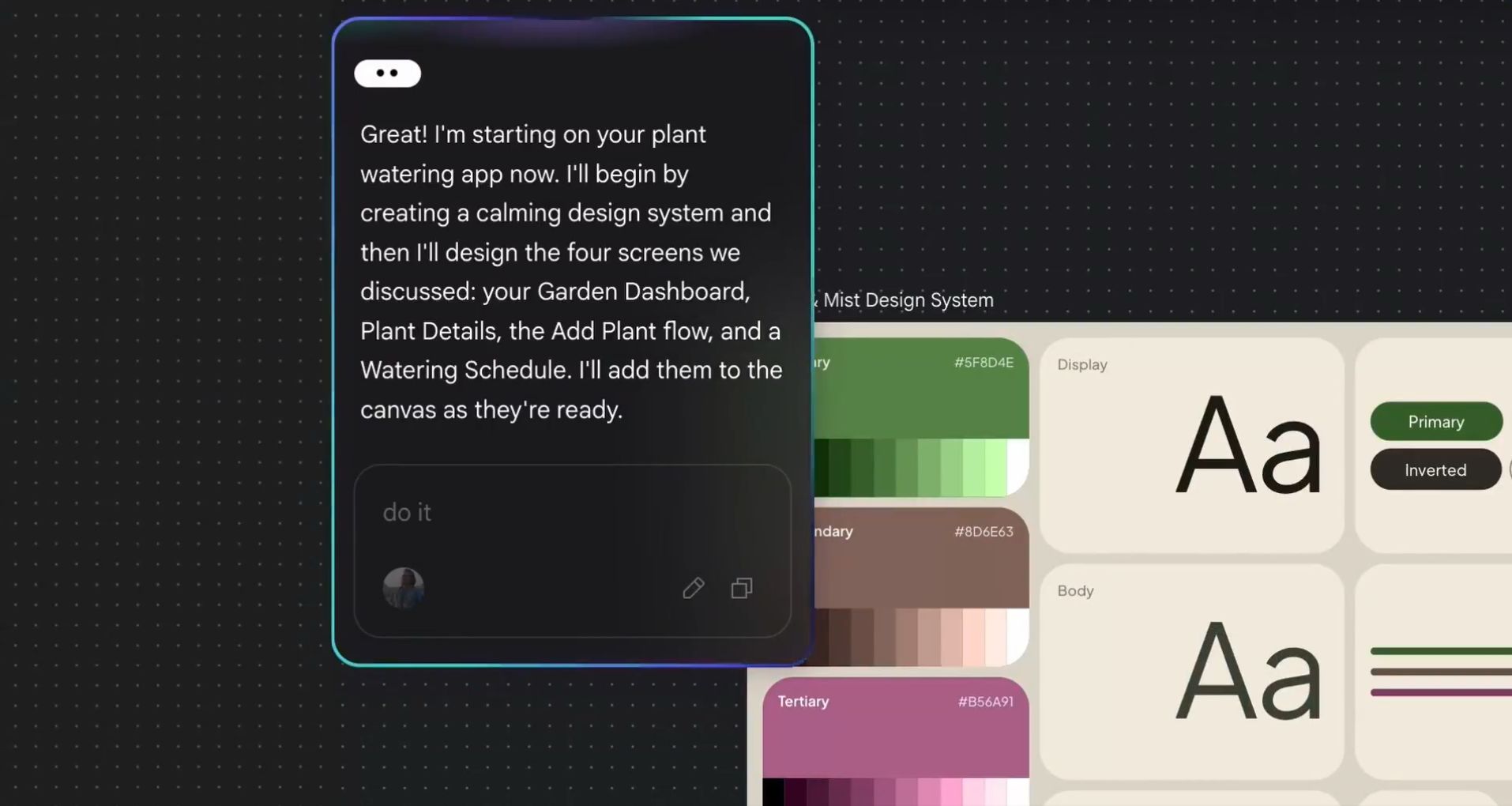

Here's what's new. An infinite canvas where you can drop in images, text, and code as context. A design agent that reasons across your entire project and works on multiple ideas at the same time. Voice mode where you talk to your canvas and it makes changes in real time. Say "give me three different menu options" or "show me this in different color palettes" and it just does it while you're talking.

You can now stitch screens together in seconds and hit Play to preview your interactive app flow instantly. The AI auto-generates logical next screens based on user clicks. So you're not just designing individual screens anymore. You're designing entire user journeys.

They also shipped DESIGN.md, a new agent-friendly markdown file that lets you export and import your design rules across tools. Extract a design system from any URL and apply it to a new project. No more starting from scratch every time.
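To make the DESIGN.md idea concrete, here is a rough sketch of how a script or agent might read design rules out of a markdown file. Big caveat: the layout below (`## Section` headings with `- key: value` bullets) is a made-up assumption for illustration, not the format Stitch exports, and `parse_design_rules` is a hypothetical helper.

```python
import re

# Hypothetical DESIGN.md content. The real file Stitch exports may look
# completely different; this layout is an assumption for illustration only.
SAMPLE_DESIGN_MD = """\
# Design System

## Colors
- primary: #1A73E8
- surface: #FFFFFF

## Typography
- heading-font: Google Sans
- body-size: 16px
"""


def parse_design_rules(markdown_text):
    """Collect '- key: value' bullets under each '## Section' heading."""
    rules = {}
    section = None
    for line in markdown_text.splitlines():
        heading = re.match(r"^##\s+(.+)$", line)
        if heading:
            # Start a new group of rules for this section.
            section = heading.group(1).strip()
            rules[section] = {}
            continue
        bullet = re.match(r"^-\s+([^:]+):\s*(.+)$", line)
        if bullet and section is not None:
            rules[section][bullet.group(1).strip()] = bullet.group(2).strip()
    return rules


rules = parse_design_rules(SAMPLE_DESIGN_MD)
print(rules["Colors"]["primary"])
```

The point is less the parser and more the shape: because the rules live in plain markdown, any tool or agent that can read text can pick them up.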

And the developer handoff is real. MCP server and SDK integrations mean you can export directly into tools like AI Studio and Antigravity. Design to production in one pipeline.

Stitch is free and live right now at stitch.withgoogle.com for anyone 18 and older wherever Gemini is available.

Why This Is A Bigger Deal

Because Google just made "vibe designing" a real thing.

Up until now, the AI design space was split. You had tools that could generate a pretty mockup from a prompt. And you had tools where real design work actually happened. Figma for collaboration. Code editors for development. The gap between "AI generated this" and "a developer can build this" was still massive.

Stitch just closed that gap. You describe what you want your users to feel. The AI generates the interface. You talk to it and refine in real time. Then you export it straight to Figma or as production-ready code. No handoff friction. No recreating things from scratch in a different tool.

The voice mode is the part that changes everything. Designers don't want to type prompts while they're in a creative flow. They want to think out loud and see results. That's exactly what this does.

Industry Impact

Where This Is All Going

Stitch isn't competing with Figma. It's competing with the entire design workflow.

Right now most teams go through the same cycle. Brainstorm on a whiteboard. Wireframe in one tool. Design in another. Hand off to developers who rebuild everything from scratch in code. Every step is a translation layer and every translation layer loses something.

Stitch wants to kill all of that. You talk, it designs. You click Play, it prototypes. You export, it ships production code. One tool from idea to deployed app. And it's free.

Google already has Antigravity for AI-native coding. They have Gemini in Chrome for browser agents. Now they have Stitch for design. Connect those three and you're looking at a world where one person can concept, design, build, and ship a full product without ever opening a second app.

That's not a design tool. That's a company killer.

Google DeepMind CEO Demis Hassabis

Also Today

Anthropic published the largest qualitative AI study ever. 81,000 Claude users across 159 countries and 70 languages shared how they use AI, what they hope for, and what scares them.

Anthropic’s Survey Findings Announced Via X

The biggest finding? Hope and fear aren't two camps. They live inside the same person. One user got a correct medical diagnosis after 9 years of being misdiagnosed. Another got laid off because their company replaced them with AI. Independent workers are seeing real financial gains at 3x the rate of traditional employees. But the people most exposed to AI disruption are older, female, more educated, and higher paid.

No country dipped below 60% positive sentiment. But lower-income countries were the most optimistic. The people with the least are hoping the most.

Meme Of The Day 😂

How designers feel every couple of days.

That cycle of "It's over" to "We're so back" is literally what happens every time Google or Figma ships an AI update. Stitch just pushed everyone back to "We're so back" again.

The Recap

Google rebuilt Stitch from the ground up. Voice controls, infinite canvas, instant prototyping, and a design agent that works alongside you. Free for everyone. Anthropic asked 81,000 people what they hope and fear about AI. Turns out it's the same people feeling both. Google is making it easier to build. Anthropic is making sure we don't forget what we're building toward.