What Just Happened

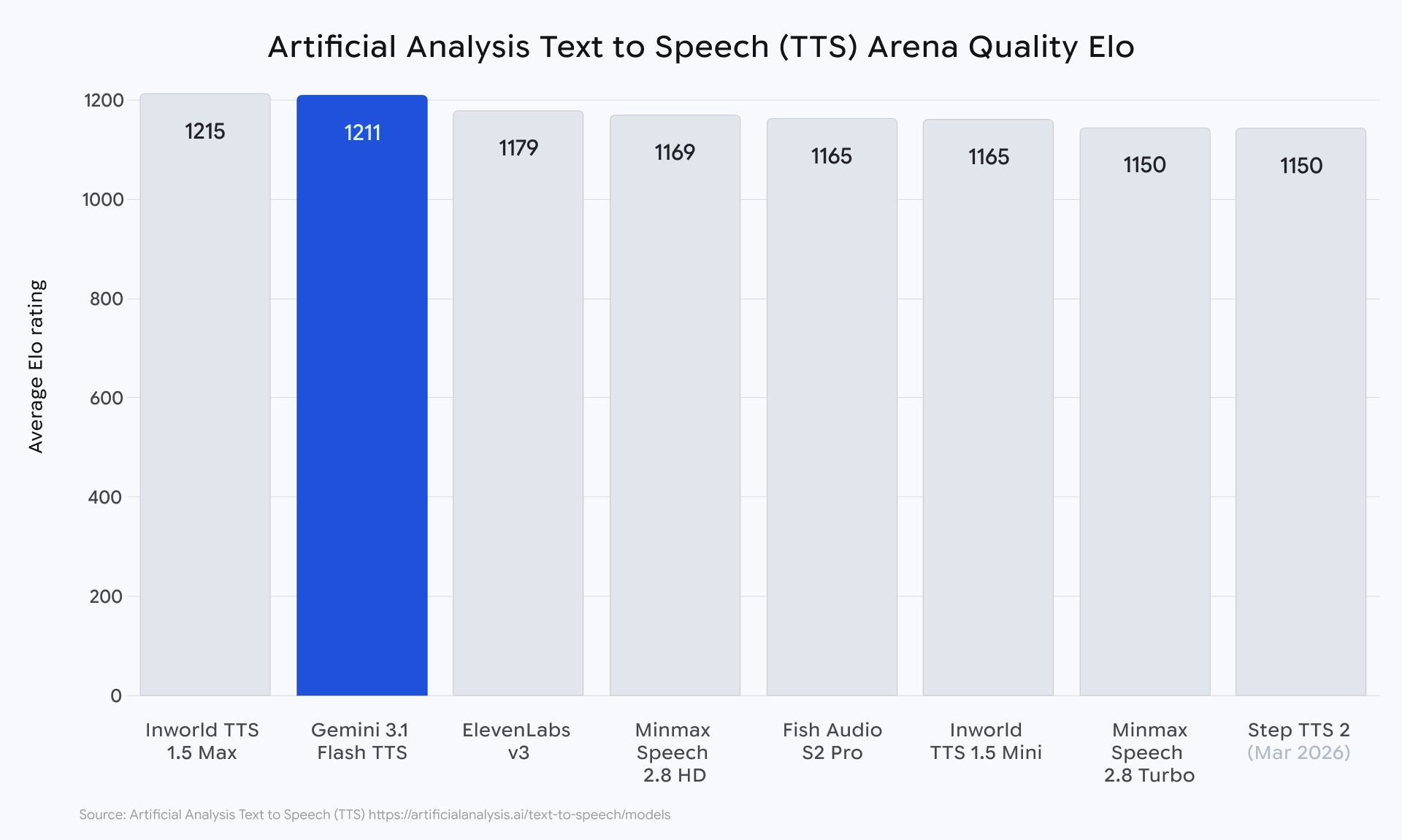

Google just dropped Gemini 3.1 Flash TTS Preview, its new low-latency text-to-speech model built for more natural, steerable, and expressive AI voice output. Google says the model introduces expressive audio tags for narration control and improves naturalness, controllability, and multilinguality.

This matters because Gemini is clearly turning into more than a text model family.

Google is now building a much fuller voice stack around it.

ARTIFICIAL INTELLIGENCE

🌎 Google Keeps Expanding Gemini Into Audio

This is not just a random TTS update.

Google’s official Gemini pages now position Gemini 3.1 Flash TTS alongside Gemini 3.1 Flash Live, which powers real-time voice interactions, and the broader Gemini 3.1 lineup. That tells you something pretty clearly: voice is no longer a side feature. It is becoming a real product layer inside Gemini.

And the timing is important.

Just a few weeks ago, Google expanded Search Live globally using Gemini 3.1 Flash Live, framing that model as the thing making voice conversations with Search more natural and multilingual across 200-plus countries and territories. Now it is following that with a dedicated TTS release. That is not a one-off. That is a roadmap.

🧠 What’s Actually New?

The simple version is this:

Google wants Gemini to sound better, feel more controllable, and become easier to build voice products on top of.

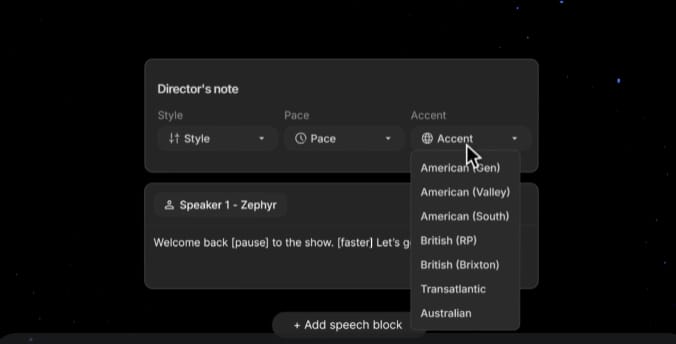

According to Google’s model docs, gemini-3.1-flash-tts-preview supports text input and audio output, with an 8,192 input token limit and 16,384 output token limit. Google describes it as a powerful, low-latency speech generation model with natural outputs, steerable prompts, and new expressive tags for precise narration control.

That last part is the real product story.

This is not just “AI reads text aloud.” It is Google trying to make voice generation feel more directed and usable, which matters a lot for assistants, demos, narration, customer support, content creation, and any product where robotic audio instantly kills the experience.
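For builders who want to picture what working with the model card details looks like, here is a minimal Python sketch. Treat it as a set of assumptions, not Google's documented API for this model. Only the model name and the 8,192 input token limit come from Google's docs as quoted above. The request field layout, the `Kore` voice name, and the 24 kHz 16-bit mono PCM output format are borrowed from earlier Gemini TTS previews and may differ here. The helper names and the 4-characters-per-token ratio are hypothetical. The syntax for the new expressive tags is not covered in this piece, so the sketch sticks to a plain-language style instruction.

```python
import base64
import wave

MODEL_ID = "gemini-3.1-flash-tts-preview"  # model name as quoted in the article
INPUT_TOKEN_LIMIT = 8192                   # input limit as quoted in the article


def build_tts_request(text: str, voice_name: str = "Kore") -> dict:
    """Build a generateContent-style JSON body that asks for audio output.

    Assumption: the field layout mirrors earlier Gemini TTS previews.
    """
    return {
        "contents": [{"parts": [{"text": text}]}],
        "generationConfig": {
            "responseModalities": ["AUDIO"],
            "speechConfig": {
                "voiceConfig": {"prebuiltVoiceConfig": {"voiceName": voice_name}}
            },
        },
    }


def probably_fits(text: str, chars_per_token: float = 4.0) -> bool:
    """Rough pre-flight length check; the chars-per-token ratio is a heuristic."""
    return len(text) / chars_per_token <= INPUT_TOKEN_LIMIT


def save_pcm_as_wav(b64_pcm: str, path: str, rate: int = 24000) -> int:
    """Decode base64 PCM audio and write a mono 16-bit WAV file.

    Assumption: 24 kHz, 16-bit, mono PCM, as in earlier Gemini TTS previews.
    Returns the number of audio frames written.
    """
    pcm = base64.b64decode(b64_pcm)
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)   # mono
        wav.setsampwidth(2)   # 2 bytes per sample = 16-bit
        wav.setframerate(rate)
        wav.writeframes(pcm)
    return len(pcm) // 2
```

In practice you would send `build_tts_request("Say warmly: welcome back!")` to the model with your API key and an HTTP client or Google's SDK (not shown here), then pass the base64 audio from the response to `save_pcm_as_wav`. Check the current model docs before relying on any field name above.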

Benchmarks via Google DeepMind

Industry Impact

Why This Matters

Voice is becoming one of the most important surfaces in AI.

Text is still the default for power users, but for mainstream products, voice often feels faster, more natural, and more ambient. If Google can own more of that stack, from real-time voice interaction to expressive speech generation, it gets closer to controlling the full loop: listen, reason, respond, speak.

That is why this release matters beyond the model card.

It suggests Google is not just trying to win on chat or benchmarks. It is trying to turn Gemini into an end-to-end voice platform for developers and products.

This is probably a normal person's depiction of what voice AI would look like if pictured (Source: ADM + S)

⚡ The Vibe Check

The vibe is pretty simple.

Google keeps quietly making Gemini harder to ignore.

A few weeks ago it expanded Gemini-powered voice search globally. Now it is dropping a dedicated expressive TTS model. Taken together, that looks a lot less like a scattered set of launches and a lot more like a coordinated push into AI audio.

This is how platform plays usually happen.

Not with one giant reveal, but by steadily filling in every layer of the stack.

Google DeepMind CEO Demis Hassabis

Also Today

Subagents have arrived in Gemini CLI.

Google says Gemini CLI can now delegate work to specialized expert subagents that operate in their own separate context windows, with their own instructions and tool access. The point is to keep the main session focused while repetitive, complex, or high-volume work gets handed off to smaller agents in parallel. Google also says developers can explicitly call them using @agent syntax, view them with /agents, and customize them with Markdown files.

That matters because this is the clearest sign yet that Gemini CLI is moving from “one helpful agent in your terminal” to something closer to a multi-agent workspace. Once one agent can spawn a small team around a task, the product starts feeling less like a coding assistant and more like an orchestration layer.
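If the "separate context windows" idea feels abstract, here is a toy Python sketch of the pattern. It is not Gemini CLI's implementation and uses none of its real APIs. The `Subagent` class and `delegate` function are hypothetical names made up for illustration. The point it shows: each specialist keeps its own scratch work, tasks run in parallel, and only short summaries land back in the main session.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class Subagent:
    """Toy stand-in for a specialist with its own instructions and context."""
    name: str
    instructions: str
    context: list = field(default_factory=list)  # private to this agent

    def run(self, task: str) -> str:
        # All intermediate work stays in the agent's own context...
        self.context.append(f"instructions: {self.instructions}")
        self.context.append(f"task: {task}")
        self.context.append(f"scratch work for {task!r}")
        # ...and only a short summary goes back to the caller.
        return f"{self.name}: finished {task!r}"


def delegate(main_context: list, jobs: list) -> list:
    """Run (agent, task) pairs in parallel; add only summaries to main_context."""
    with ThreadPoolExecutor() as pool:
        # pool.map returns results in the same order as the jobs list.
        summaries = list(pool.map(lambda job: job[0].run(job[1]), jobs))
    main_context.extend(summaries)
    return summaries
```

Hand `delegate` a main session list and a couple of `(agent, task)` pairs, and the main list grows by one line per task while each agent's `context` holds the messy middle. That is the "keep the main session focused" idea in miniature.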

What’s The Recap?

Google just launched Gemini 3.1 Flash TTS Preview, a new expressive text-to-speech model built for more natural, steerable voice generation across 70+ languages. That is a meaningful signal that Gemini is expanding beyond text and becoming a much fuller audio stack.

At the same time, Google added subagents to Gemini CLI, showing the same broader pattern on the developer side. The Gemini story right now is not just about smarter models. It is about building out the layers around them, from voice to multi-agent workflows, until the whole stack starts to feel complete.

Quick Links:

Gemini 3.1 Flash TTS Drop 👉 Here

Subagents In Gemini CLI 👉 Here

Stay building. 🤖