What Just Happened

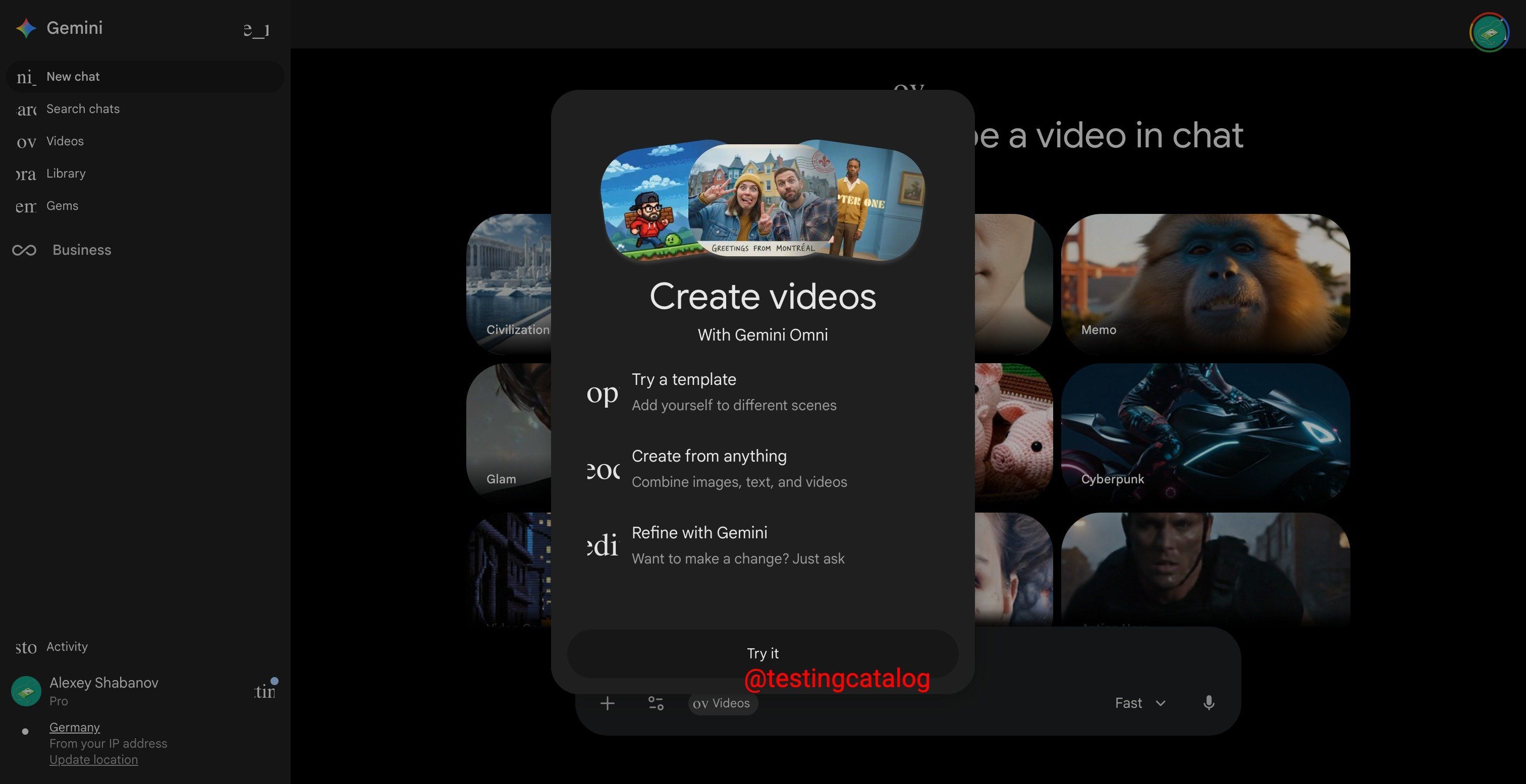

Google I/O 2026 opens Monday, May 19. In the run-up to one of the most anticipated Google events in years, a new AI video model has leaked inside the Gemini app. The model is called Omni. It surfaced when select Gemini users opened the app and were greeted with a card reading "Create with Gemini Omni: meet our new video model. Remix your videos, edit directly in chat, try a template, and more." That string appeared in the production Gemini interface, not in hidden developer code. The leak has been verified by Android Authority, 9to5Google, TestingCatalog, and Programming Insider.

The model is real. The capabilities are real. And the timing is significant. Three weeks ago OpenAI shut down Sora, removing the most recognizable consumer AI video product from the market and leaving a category-defining opening. Google appears ready to fill it. Monday's keynote at Shoreline Amphitheatre is going to be one of the most consequential AI product launches of the year. Omni is the story you should be tracking.

ARTIFICIAL INTELLIGENCE

🌎 What The Leak Actually Shows

Here is what verified reporting has confirmed about Gemini Omni.

The discovery. An X user named Thomas16937378 first spotted a UI string inside Gemini's video generation tab that read "Start with an idea or try a template. Powered by Omni." TestingCatalog, the most reliable Google AI leak tracker, picked it up immediately. Within hours the AI community was dissecting every frame.

The implied capability. The name Omni is itself the signal. Google currently runs a fragmented AI stack. Veo 3.1 handles video. Nano Banana 2 and Nano Banana Pro handle images. Standard Gemini handles text. Each lives in a different execution path. An "Omni" model would unify all three modalities under one system. Native text, image, and video generation in a single model. That would leapfrog every current frontier offering. Seedance 2.0 is video-only. Kling 3.0 is video-only. Even OpenAI's GPT-4o keeps image generation on a separate execution path from video. A true omni-model changes how creators prompt entirely.

The demos that have surfaced. A Reddit user who got early access ran a math test. They prompted the model to generate a professor writing a trigonometric identities proof on a chalkboard. The output was not perfect but the equations rendered correctly. Text inside generated video is the single hardest problem in AI video and Omni handled it. A separate dinner scene prompt was less successful, with objects materializing from thin air. The model appears limited to 10-second clips for now. One user reported that two video generations consumed most of their daily AI Pro quota.

Trigonometric identities - Generated by Gemini Omni

The metadata. A user found what appears to be the model ID buried in Gemini's code: bard_eac_video_generation_omni. It sits next to Toucan, the internal codename for Google's current Veo 3.1-powered video pathway. That positioning suggests Omni is either replacing Veo or operating alongside it as the higher-tier model.

What is not confirmed. Google has not officially announced Omni. The exact capabilities, pricing, and launch date are speculation. The strongest evidence points to a reveal at Google I/O on Monday or Tuesday next week.

🧠 What Does This Mean For AI Video?

It matters because OpenAI shut down Sora on April 26, and the AI video market has been without a clear consumer leader since.

For two years Sora was the AI video product that defined the category for mainstream users. It was the model people referenced when they imagined what AI video could do. It was the headline competitor in every AI video conversation. OpenAI pulled it three weeks ago. The reasons were not made fully public, but content moderation challenges, compute costs, and reputational risk from generated content appear to have factored in.

RIP Sora 😢

The result is a category opening. Seedance 2.0 from ByteDance leads on technical benchmarks but is not a consumer product. Kling 3.0 is strong but China-based and not the default for Western users. Runway Gen-4 is a professional creative tool, not a consumer destination. For the average user, there is no clear answer to the question "what should I use to generate AI video right now?"

Google sees the opening. Omni is positioned to fill it. If Google launches an omni-model that handles text, image, and video generation in one unified system, distributed through Gemini's existing user base across phones, Chrome, laptops, and cars, they capture the category that Sora vacated.

The timing is not accidental. The Sora shutdown happened three weeks ago. The Omni leak happened two weeks ago. Google I/O is in four days. The product roadmap that gets revealed on Monday was almost certainly accelerated to take advantage of the moment.

Google IO

What To Watch For At Google I/O Monday

Google I/O 2026 opens May 19. The keynote is the centerpiece. Here is what to actually pay attention to.

Does Omni launch publicly or remain a preview? Google can announce Omni without immediately shipping it. A "coming soon" announcement gets the buzz without the operational risk. A public launch with API access gets the developer momentum.

Pricing and quota structure. One early-access user reported that two generations consumed most of an AI Pro daily quota. That suggests Omni is expensive to run. The pricing tier Google reveals will tell us how confident they are in their cost structure.

Modality unification. The biggest question is whether Omni is truly omni or whether it is a video model with a marketing name. If text, image, and video genuinely come from one model, this changes the entire AI creative workflow.

Veo integration. Veo 3.1 is the current Gemini video model. If Omni replaces Veo, that is one story. If Omni operates alongside Veo as a higher tier, that is a different story. The split will tell us how confident Google is in Omni's quality.

Distribution channels. Will Omni land in Gemini consumer apps, in Vertex AI for developers, in Google Workspace for enterprise, or all three at once? Each channel tells you a different story about who Google is targeting first.

⚡ What Does The Future of AI Video Entail?

If you create content, AI video is about to get significantly more accessible. If Omni ships at scale, generating 10-second video clips for social posts, ads, internal communications, and creative projects becomes a one-prompt task inside an app you already use.

If you build AI products, the platform economics are about to shift again. Generating high-quality video used to require expensive specialized tools. If Google bundles it into Gemini Pro pricing, the cost of including video generation in your product collapses.

If you work in marketing or advertising, the implications are large. Personalized video at scale becomes feasible. A single creative brief generates dozens of variants targeted at different audiences. The production cost structure of video advertising changes fundamentally.

If you watch the AI race, Monday is one of the biggest single days of the year. Google I/O has historically been where Google announces its biggest AI moves. This year the stakes are higher because Anthropic just hit $900 billion in valuation, OpenAI is preparing an IPO, and the consumer AI race needs a new chapter. Omni could be that chapter for Google. If it ships well, the narrative around Google catching up in AI shifts to Google leading.

What’s The Recap?

A new Google AI video model called Omni has leaked inside the Gemini app ahead of Google I/O 2026 next week. The leak has been verified by Android Authority, 9to5Google, and TestingCatalog. The model name suggests a unified omni-model handling text, image, and video in one system, which would leapfrog every current frontier AI video offering. Early demos show 10-second clips with strong text rendering and chat-based editing. A math equation test rendered correctly, and text inside generated video is the single hardest problem in AI video. The timing is significant. OpenAI shut down Sora on April 26, leaving a category opening. Google I/O opens Monday, May 19, at Shoreline Amphitheatre. The keynote is going to be one of the most consequential AI product launches of the year. Whether Omni lives up to the leak or falls short of the hype, the next four days will define how the consumer AI video category looks for the rest of 2026.

Stay building. 🤖