What Just Happened

Today Google DeepMind officially launched Gemma 4, a new family of open-weight models aimed at closing the gap between open and closed AI.

Gemma 4 is released under the Apache 2.0 license, meaning developers and companies can use, modify, and redistribute the models in commercial and open projects without the custom license terms that restricted previous Gemma generations.

The family spans four sizes, from tiny edge-friendly models to large, powerful variants, and is built on the same research foundations as Google’s Gemini 3 systems. It brings strong reasoning, coding, and agentic capabilities to hardware you can actually run it on.

ARTIFICIAL INTELLIGENCE

🌎 What This Release Actually Means

This isn’t just a new “open-weight model drop.”

Gemma 4 represents Google’s largest public push yet into truly open models: models that businesses, researchers, and developers can run locally, customize deeply, and integrate into apps without cloud dependency or restrictive terms.

Here’s what stands out:

Full Apache 2.0 licensing makes it one of the most permissive major open model releases ever.

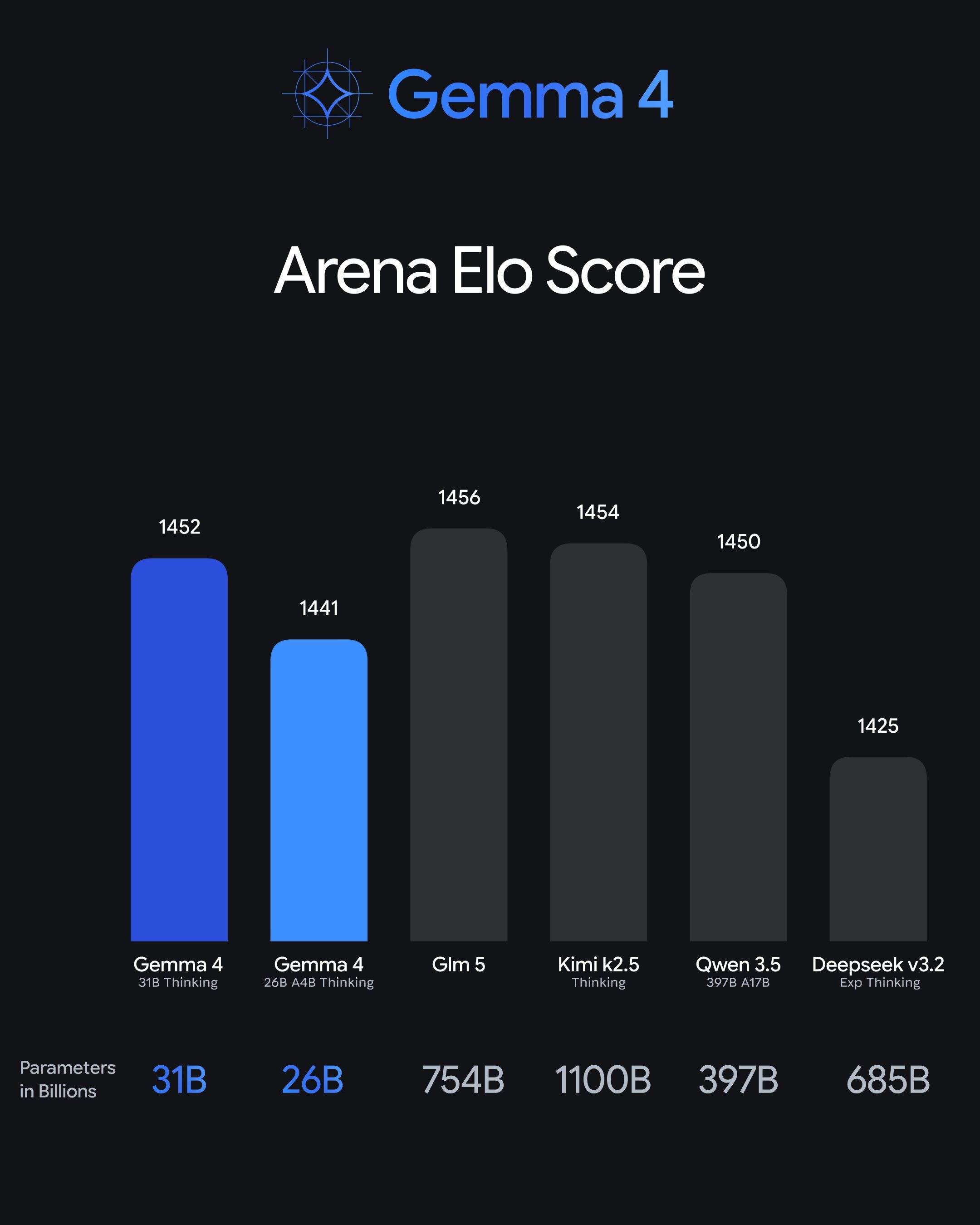

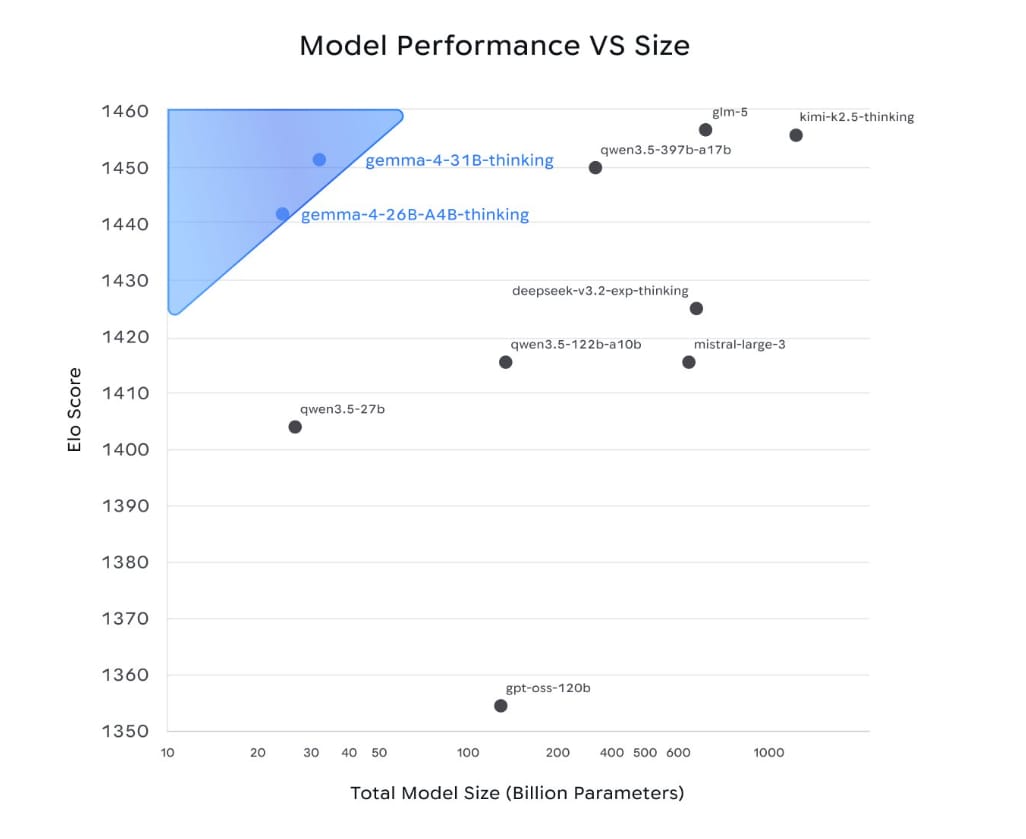

Four model sizes let you choose the right balance of performance and efficiency, from tiny edge models to 31B dense models ranked top-3 among open models globally on Arena benchmarks.

Agentic workflows and function calling are built in, not bolted on — making Gemma 4 usable as both a reasoning engine and a local agent platform.

Support for multimodal input (text, images, audio) and context windows of up to 256K tokens make it useful across workflows from coding to analysis.
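To put the size range in practical terms, here is a rough back-of-the-envelope sketch of how much memory a model's weights alone would need. It assumes the common rule of thumb of parameters times bytes per parameter; the precision names and the `weight_memory_gb` helper are illustrative and not part of any Gemma tooling, and real usage will be higher once activations, the KV cache, and runtime overhead are counted.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Assumption: weights only. Activations, KV cache, and runtime overhead
# are ignored, so actual memory use will be higher than these figures.

# Illustrative precisions (hypothetical labels, not official Gemma 4 formats).
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Return approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * BYTES_PER_PARAM[precision]
    return total_bytes / 1e9


if __name__ == "__main__":
    # The 31B figure comes from the article; the precisions are assumptions.
    for precision in BYTES_PER_PARAM:
        size = weight_memory_gb(31, precision)
        print(f"31B @ {precision}: ~{size:.1f} GB of weights")
```

Under these assumptions, a 31B model goes from roughly 62 GB of weights at 16-bit precision to about 15.5 GB at 4-bit, which is why lower-precision variants matter so much for running on desktop GPUs.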

This is Google’s clearest statement yet that open‑weight AI is not a side project — it’s a strategic front.

🧠 Why It Matters Right Now

For years, the narrative around large language models was dominated by massive cloud‑only systems that you couldn’t run locally without huge infrastructure. Gemma 4 flips that script.

Today’s release means:

Developers can build powerful AI experiences offline or on-device, from phones and Raspberry Pi boards to desktop GPUs.

Enterprises can deploy advanced reasoning and automation without sending every prompt to the cloud.

Open models now compete on capability, not just accessibility, challenging closed models and Chinese open-weight competitors with something equally capable and truly open.

This matters for autonomy, privacy, cost, and speed. It’s a foundational release — not just a model revision.

Industry Impact

⚡ The Vibe Check

This is a high-stakes moment in the open-model world.

Gemma 4 ships at a time when open models are no longer an afterthought. They are now critical infrastructure for developers, products, and companies that want control over how and where AI runs.

By pairing open licensing with agent‑ready capabilities, Google just made it significantly easier for builders to go from prototype to production without cloud costs killing the budget.

Model performance vs. size graph, via Google DeepMind

Whether you’re on a laptop, phone, or server rack, today’s drop means AI that runs anywhere, without lock‑in.

Also Today

Hardware partners are racing to support Gemma 4. NVIDIA says it’s accelerating the new models across RTX PCs, DGX Spark, and edge devices, optimized for both inference speed and agentic use cases.

And on the edge side, Google is launching tools and libraries to run Gemma 4 efficiently on everything from Android phones to IoT modules, broadening where advanced AI can actually run.

Taken together, this means the open AI ecosystem is finally shifting from a cloud‑only mentality to a truly distributed AI era.

THE BIG PICTURE

Google has officially launched Gemma 4, a family of open‑weight models under the Apache 2.0 license that bring frontier‑level reasoning, agentic workflows, coding, and multimodal capabilities to hardware from phones to workstations.

This release marks a concrete inflection point: open models are now powerful, permissively licensed, and capable of running offline or on-device, which changes what teams can build without cloud dependency.

Stay building. 🤖